We recently ran into issues with Broadcom (QLogic) network cards on a few servers. Turning on Jumbo Frames, which allows 9,000-byte frames instead of the default 1,500 bytes and is supposed to increase capacity, actually caused a dramatic reduction in overall bandwidth. I used a program called iperf to do the bandwidth testing.

With iperf we can fire up the daemon (a service / exe running in memory) on one machine, then run iperf on a client to connect and do a sample transfer from point to point.

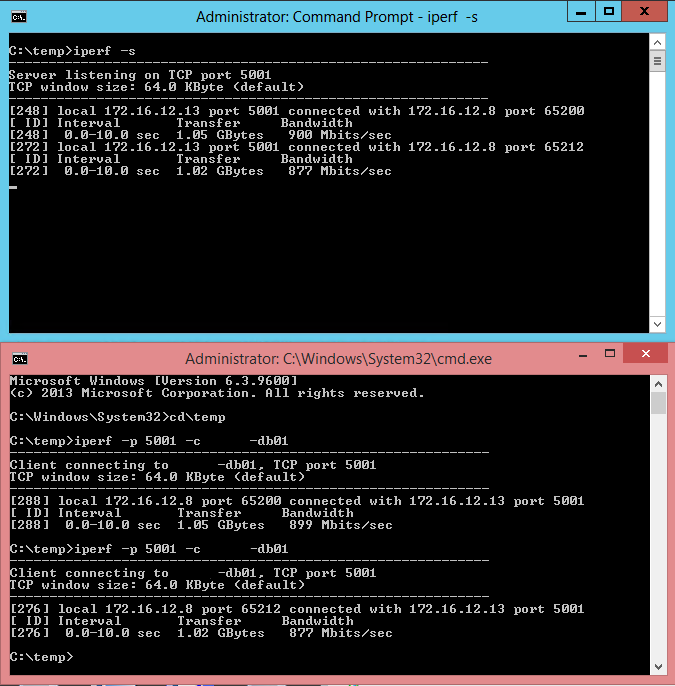

Server: iperf -s

Client: iperf -p 5001 -c yourhost_or_IP_here

iperf will then run a test transfer and report your real-world bandwidth. Below is an actual test we did with Intel NICs; there were two NICs in a “team” in a load-balancing configuration.

I ran a few tests, and in the client (reddish-bordered) command prompt I redacted the actual host name. The command is “iperf -p 5001 -c myhost-db01”; hit Enter and it runs. In the first result, we transferred 1.05 GB in the default 10-second test at 899 megabits per second. On a gigabit port your theoretical max throughput is 1000 Mbits/sec (megabits per second). This test was run during high utilization and is well within spec.
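The figures above can be sanity-checked with a little arithmetic. This is a minimal sketch, not part of iperf itself: the function name `mbits_per_sec` is made up for illustration, and it assumes iperf reports "GBytes" in binary units (2**30 bytes) while reporting bandwidth in decimal megabits.

```python
# Cross-check an iperf summary: the transfer size over the test interval
# should roughly match the reported bandwidth.
GBYTE = 1024 ** 3  # assumption: iperf's "GBytes" are binary (2**30 bytes)


def mbits_per_sec(gbytes: float, seconds: float) -> float:
    """Convert a transfer of `gbytes` over `seconds` to decimal Mbits/sec."""
    bits = gbytes * GBYTE * 8
    return bits / seconds / 1_000_000


rate = mbits_per_sec(1.05, 10)  # 1.05 GB in the default 10-second test
print(f"{rate:.0f} Mbits/sec, {rate / 1000:.0%} of a 1000 Mbits/sec link")
```

This comes out at roughly 902 Mbits/sec, which lines up with the 899 Mbits/sec iperf reported once you allow for the transfer size being rounded to 1.05 in the output.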

What does this all mean, ultimately? If your application isn’t performing well and you have plenty of bandwidth, as in the test above, then you need to look elsewhere for application performance issues.

It might be any number of things, like:

- Antivirus scanning (you can make an application exception for your applications)

- Drive / folder scanning (you can make folder and drive exceptions for your applications)

- Processing power on the PC: how much horsepower does it have?

- Memory consumption: do you have enough RAM?

- Local disk I/O (input / output operations, or “read / write”); switching to a solid state disk would help

Is it on the server side? Perhaps there are I/O problems or memory utilization issues. Perhaps it’s on the application side, and the vendor (or you) needs to re-examine the code base and optimize it for performance. There is a long list of possibilities!

iperf also has many more options, such as packet size, so you can fully test your Jumbo Frame settings. For now, to work around the Broadcom issue, we teamed two NICs and are staying at the standard 1500 MTU (Maximum Transmission Unit) until we can figure out what the magic settings are to get it working properly. This is a well-known problem online, but nobody seems to have a definitive answer…perhaps we’ll find it! If we do…we’ll post an update.
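To put the 1500 versus 9000 MTU choice in numbers, here is a rough sketch of the best-case TCP payload efficiency for each frame size. It assumes plain Ethernet with IPv4 and TCP headers and no options or VLAN tags; the constant and function names are illustrative only, not anything iperf provides.

```python
# Best-case TCP payload efficiency on Ethernet for a given MTU.
# Assumptions: IPv4 + TCP with no header options, no VLAN tag.
ETH_OVERHEAD = 38    # preamble 8 + Ethernet header 14 + FCS 4 + inter-frame gap 12
IP_TCP_HEADERS = 40  # IPv4 header 20 + TCP header 20


def tcp_mss(mtu: int) -> int:
    """Largest TCP payload that fits in one packet of size `mtu`."""
    return mtu - IP_TCP_HEADERS


def payload_efficiency(mtu: int) -> float:
    """Fraction of on-the-wire bytes that is actual TCP payload."""
    return tcp_mss(mtu) / (mtu + ETH_OVERHEAD)


for mtu in (1500, 9000):
    eff = payload_efficiency(mtu)
    print(f"MTU {mtu}: MSS {tcp_mss(mtu)}, efficiency {eff:.1%}, "
          f"about {eff * 1000:.0f} Mbits/sec best case on gigabit")
```

On paper the difference is only a few percent of payload efficiency, so a dramatic drop after enabling Jumbo Frames points at a driver, firmware, or configuration problem rather than at the frame size itself. The MSS values computed here (MTU minus 40 bytes) are the kind of figure to compare against when testing with iperf's segment-size options; check `iperf --help` on your build for the exact flags.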

So far we’ve:

- Updated Firmware using the Dell OMSA LIVE updater disk

- Updated drivers

- Turned off TOE and a few other options

…so far nada, but because it’s a production server we can’t fiddle with it during busy hours. With the 1500 MTU team, things are adequate until we get the next opportunity to resolve this issue.

If you’re having performance problems we can help!

800-864-9497
